June 2009

Below we will show audio analogies to multiplying signals versus mixing signals. Before we get into this engineering topic, let's look at a quote from February 13, 2009 by Rush Limbaugh, who claimed that Democrats:

"have reformatted the [economic recovery] bill -- they've made it a PDF file when they posted it. ... And, so, you can read every page, but you cannot keyword search it. It's not a text file as legislation normally is as posted on these public websites. They don't want anybody knowing what's in this."

Probably most of Rush's audience doesn't know what a "keyword search" is (only a small percentage of EIB radio listeners have dial-up modems in their trailers). But indeed, the Economic Recovery Bill was and is searchable in Portable Document Format. He'll get no mega-dittos from the DITA Nation! Not when you consider that the first initial of DITA stands for Darwin.

Just an observation for the Republican Party... you need to expand your base, not keep playing to a base that is dying off. As the years go by, the percentage of population that is 1. homophobe, 2. racist and 3. computer illiterate is declining.

And congrats to the author of Lies and the Lying Liars Who Tell Them: A Fair and Balanced Look at the Right, as well as Rush Limbaugh Is a Big Fat Idiot, the new Junior Senator from Minnesota, Al Franken! Now we shall return to the topic.

Sub-audio frequency mixing

Here's an everyday example of frequency mixing where you can hear both input signals and the beat frequency (output) signal. We have a page that explains mixer waveforms; you might want to review it. Take two large friends to the local gym, and have them wear Converse high-top sneakers. Put them on adjacent treadmills, and set one at six miles per hour and the other at seven. Their feet should be striking the tread at around three beats per second, but at slightly different paces. You might have to have them put on headphones and watch television so they don't sync up with each other. Now listen carefully to the thumping sound... at a periodic interval their Clydesdale footsteps will line up in time and you will hear the "signal":

th-thump th-thump th-thump THUMP THUMP THUMP...

The difference frequency can be determined by taking the reciprocal of that interval: the footsteps line up once per cycle of the difference between the two cadences. For example, if the THUMP THUMP occurs every ten seconds, the beat frequency is 0.1 Hertz. (If you sum two equal sine waves instead, the slow cosine envelope runs at half that, 0.05 Hertz with a 20-second period, but its magnitude peaks twice per period, so the loud spots still arrive every ten seconds.) When you are done with this worthy experiment, reward your two friends with a plate of donuts, with an extra portion going to the faster athlete. Better still, create a video and send it to us!
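If you want to check the treadmill arithmetic before recruiting friends, here is a minimal sketch. It assumes each runner's footfalls form an ideal, perfectly periodic pulse train starting in step at t = 0, and the two cadences (3.0 and 2.9 footfalls per second) are made-up numbers chosen to keep the arithmetic tidy. Under those assumptions the footfalls line up once per cycle of the difference between the two cadences.

```python
from fractions import Fraction

def footfall_times(cadence_hz, duration_s):
    """Exact times (in seconds) of every footfall from t = 0 to duration_s."""
    period = 1 / Fraction(cadence_hz)
    count = int(Fraction(duration_s) / period)
    return {k * period for k in range(count + 1)}

def coincidences(cadence_a_hz, cadence_b_hz, duration_s):
    """Times at which both runners' feet land at the same instant."""
    both = (footfall_times(cadence_a_hz, duration_s)
            & footfall_times(cadence_b_hz, duration_s))
    return sorted(both)

# Hypothetical cadences (assumptions, not measurements).
CADENCE_A_HZ = Fraction(3)        # 3.0 footfalls per second
CADENCE_B_HZ = Fraction(29, 10)   # 2.9 footfalls per second

hits = coincidences(CADENCE_A_HZ, CADENCE_B_HZ, 30)
interval_s = hits[1] - hits[0]
difference_hz = abs(CADENCE_A_HZ - CADENCE_B_HZ)

print("footfalls line up at t =", [float(t) for t in hits], "seconds")
print("interval between line-ups:", float(interval_s), "s")
print("difference of the two cadences:", float(difference_hz), "Hz")
```

Exact fractions are used instead of floats so that "same instant" can be tested with plain equality. Real runners drift, so expect the gym version to be noisier.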

Frequency multiplication in audio

Today's lesson is about audio, and a man who won a Grammy for his feat of audio engineering.

Let's start the discussion with a reference to The Girls Next Door. This is the "reality" show that features Hugh Hefner and several close friends. The theme song is "Come on-a My House". Sorry, this is supposed to be a respectable work-related website, so we won't link to any of the hundreds of videos that feature this theme song. But the song is now seventy years old and we should examine its history.

The product of a pair of Armenian-American cousins, Rostom Sipan Bagdasarian and William Saroyan, "Come on-a My House" was written and first performed in 1939. Saroyan is a famous author. If we have to tell you that, don't take this personally, but you probably won't ever win Jeopardy. Too bad Netflix doesn't offer Human Comedy on DVD, otherwise there would be a shortcut to learning something about Bill Saroyan's work.

In 1951, "Come on-a My House" was recorded by Rosemary Clooney, under protest. She hated the song so much she almost got fired for refusing to sing it for Mitch Miller. It went to number 1 for eight weeks, her biggest hit. When you listen to it, you will understand why.

I'm gonna give you everything...

The next time a song featuring a harpsichord would become mainstream was in 1964, when The Addams Family hit television. It was perhaps the last great black and white TV comedy.

Neat, Sweet, and Petite

Here's some trivia: Charles Addams never bothered to name his New Yorker cartoon characters, but offered suggestions to ABC when they began producing the series. They used all of his suggestions, except for Gomez and Morticia's son. Addams suggested "Pubert", but that wouldn't play on network TV, so Pubert became Pugsley. In recent times, a second son was born in the movie Addams Family Values, sporting a mustache, and appropriately named Pubert.

So, what does this have to do with frequency conversion? Back in the early 1950s, when Bagdasarian was down on his luck, he bought a $180 tape deck (a new audio technology at the time), which featured multiple recording speeds. In 1958 he recorded The Witch Doctor, which features his voice recorded at slow tape speed and then played back at higher speed. In microwave engineering, when multiplying a signal, all of the signal's harmonic content and bandwidth are preserved. In audio, it does the same thing. Well, not exactly the same thing: Bagdasarian's technique compresses the time duration of the signal as well, so he had to sing at reduced tempo. But the harmonic (musical) relationships of tones come through perfectly. In a purely analog world, you could create a time-division multiple access format where each cell call is recorded, then played fast-forward in a time slot. Maybe we should patent that...
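The tape trick is easy to model. The sketch below is only an idealization: it assumes playback at a higher speed multiplies every frequency on the tape by the same factor and divides the duration by that factor. The sung note (a 110 Hz fundamental with two harmonics, held for four seconds) and the 2x speed factor are made-up values, not anything known about the actual recording sessions.

```python
def play_faster(partials_hz, duration_s, speed_factor):
    """Model tape playback at speed_factor times the recording speed.

    Every frequency is multiplied by speed_factor and the duration is
    divided by it (ideal tape, no wow, flutter, or equalization effects).
    """
    shifted = [f * speed_factor for f in partials_hz]
    return shifted, duration_s / speed_factor

# Hypothetical sung note: fundamental plus second and third harmonics.
recorded_hz = [110.0, 220.0, 330.0]
recorded_duration_s = 4.0

played_hz, played_duration_s = play_faster(recorded_hz, recorded_duration_s, 2.0)

# Musical relationships are ratios, so compare each partial to its fundamental.
ratios_before = [f / recorded_hz[0] for f in recorded_hz]
ratios_after = [f / played_hz[0] for f in played_hz]

print("recorded partials:", recorded_hz, "lasting", recorded_duration_s, "s")
print("played partials:  ", played_hz, "lasting", played_duration_s, "s")
print("ratios preserved: ", ratios_before == ratios_after)
```

The pitch goes up an octave and the harmonic ratios survive, but the note lasts half as long, which is why the singing had to be done slowly.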

The Witch Doctor

Thus the Chipmunks, Alvin, Simon and Theodore, were born. Bagdasarian adopted the stage name David Seville, and the Chipmunks played on with his voices until he died in 1972 at age 52. The Chipmunks live on, as your kids will tell you. Quite probably the voices are done digitally these days; what isn't?

Second topic: frequency shifting to reduce feedback noise

Most people are familiar with the problem of audio feedback. In a sound system, when the microphone gets too close to the speaker, stability is lost and the system starts to howl. This is a big problem for hearing aids: background noises cause instability as the user turns up the gain, and the hearing aid ends up whistling and doing more harm than good.

One way to break the feedback loop is to frequency-shift the audio, from the microphone to the speaker. If you moved the sound spectrum entirely up in frequency just a few Hertz, the feedback loop would be broken. In the analog days, many companies, including patent-a-day Bell Labs, studied this problem and offered analog solutions, all of which are inferior to solutions possible in today's digital world.

Analog frequency shifting seems like such an obvious solution, right? Wrong. The problem is twofold. If you shift the signal by just a few Hertz, the sound becomes unintelligible, because the resulting beat frequency warbles what you are trying to listen to. If you go to the other extreme and mix the signal up by a significant amount, like 20 or even 50 Hertz (a shift large enough that the warbling cannot be detected), the harmonic relationships of the signal spectrum are all messed up. If you shifted music by 20 Hertz up or down, it would sound like an out-of-tune piano, with the most offensive sounds in the lower octaves. If you did it to voice, it would make everyone sound like a soundtrack from a movie involving space aliens. You can't win... or can you?
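To see why a fixed shift hurts the low notes most, here is a small sketch that expresses a 20 Hertz upward shift as a pitch error in cents (hundredths of an equal-tempered semitone). The note names and frequencies are the standard A = 440 Hz octaves, picked here purely as an illustration.

```python
import math

def detune_cents(freq_hz, shift_hz):
    """Pitch error, in cents, caused by adding shift_hz to a tone at freq_hz."""
    return 1200 * math.log2((freq_hz + shift_hz) / freq_hz)

SHIFT_HZ = 20.0

# Octaves of A, lowest first (illustrative choice of notes).
notes = [("A1", 55.0), ("A2", 110.0), ("A3", 220.0), ("A4", 440.0), ("A5", 880.0)]

for name, freq_hz in notes:
    print(f"{name} {freq_hz:6.1f} Hz -> {freq_hz + SHIFT_HZ:6.1f} Hz, "
          f"{detune_cents(freq_hz, SHIFT_HZ):6.1f} cents sharp")

# An octave is a 2:1 frequency ratio; after the shift it no longer is.
low_hz, high_hz = 110.0, 220.0
octave_ratio_after = (high_hz + SHIFT_HZ) / (low_hz + SHIFT_HZ)
print(f"A2 to A3 ratio after the shift: {octave_ratio_after:.3f} (was 2.000)")
```

The same 20 Hertz is a far larger fraction of 55 Hz than of 880 Hz, so the bass ends up several semitones sharp while the treble is off by well under one. Multiplying the frequencies would keep the ratios intact; adding a constant does not.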

Here's an MIT thesis we found on the web on the topic, which discusses a few different ways to reduce oscillations due to feedback. It's not as straightforward as you might think, because the audio spectrum contains many octaves, and only the higher frequencies typically produce oscillations. Why is that anyway? At lower frequencies, if the amplifier is inverting (like most amplifiers), the stray signal return will usually come back out of phase and does no harm.

This information came from Allan, in June 2010... thanks!

About 35 years ago I designed a product built around the principles you describe. It was for a voice teleconferencing unit.

I shifted the audio 10 Hz up and suppressed the 10 Hz carrier and the unwanted LSB in a double balanced mixer (I think it was the old Motorola MC1596). The audio was analogue filtered 300-3000 Hz, wideband I and Q with passive RC networks. The 10 Hz oscillator was a biquad giving I and Q from adjacent outputs.

Despite your worries it worked very well, giving about 10 dB more available sound level at each end before hooting took place, and Neve of Melbourn, England sold a lot of them.

Obviously on music it would sound a bit funny, but with speech it was fine.
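For readers who have not met the I and Q approach, the sketch below shows the general phasing method of single-sideband shifting for one test tone. It is not Allan's circuit: it assumes mathematically perfect 90 degree phase splits for both the audio and the 10 Hz oscillator, whereas real hardware has to approximate the audio phase split across the whole 300 to 3000 Hz band. The 1000 Hz test tone and 8000 Hz sample rate are arbitrary choices.

```python
import math

def shift_up(tone_hz, shift_hz, t):
    """Phasing-method single-sideband up-shift of one cosine tone at time t."""
    i_audio = math.cos(2 * math.pi * tone_hz * t)   # in-phase audio
    q_audio = math.sin(2 * math.pi * tone_hz * t)   # same tone, 90 degrees behind
    i_osc = math.cos(2 * math.pi * shift_hz * t)    # in-phase oscillator
    q_osc = math.sin(2 * math.pi * shift_hz * t)    # quadrature oscillator
    # cos(A)cos(B) - sin(A)sin(B) = cos(A + B): only the upper sideband
    # survives; the carrier and the lower sideband cancel.
    return i_audio * i_osc - q_audio * q_osc

TONE_HZ = 1000.0        # arbitrary test tone inside the speech band
SHIFT_HZ = 10.0         # the shift described in the message above
SAMPLE_RATE_HZ = 8000   # arbitrary sample rate for the check

# Compare one second of output against an ideal tone at TONE_HZ + SHIFT_HZ.
times = [n / SAMPLE_RATE_HZ for n in range(SAMPLE_RATE_HZ)]
worst_error = max(
    abs(shift_up(TONE_HZ, SHIFT_HZ, t)
        - math.cos(2 * math.pi * (TONE_HZ + SHIFT_HZ) * t))
    for t in times
)
print("largest deviation from a pure 1010 Hz tone:", worst_error)
```

Flipping the minus sign to a plus selects the lower sideband instead, which would shift the tone down by 10 Hz.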

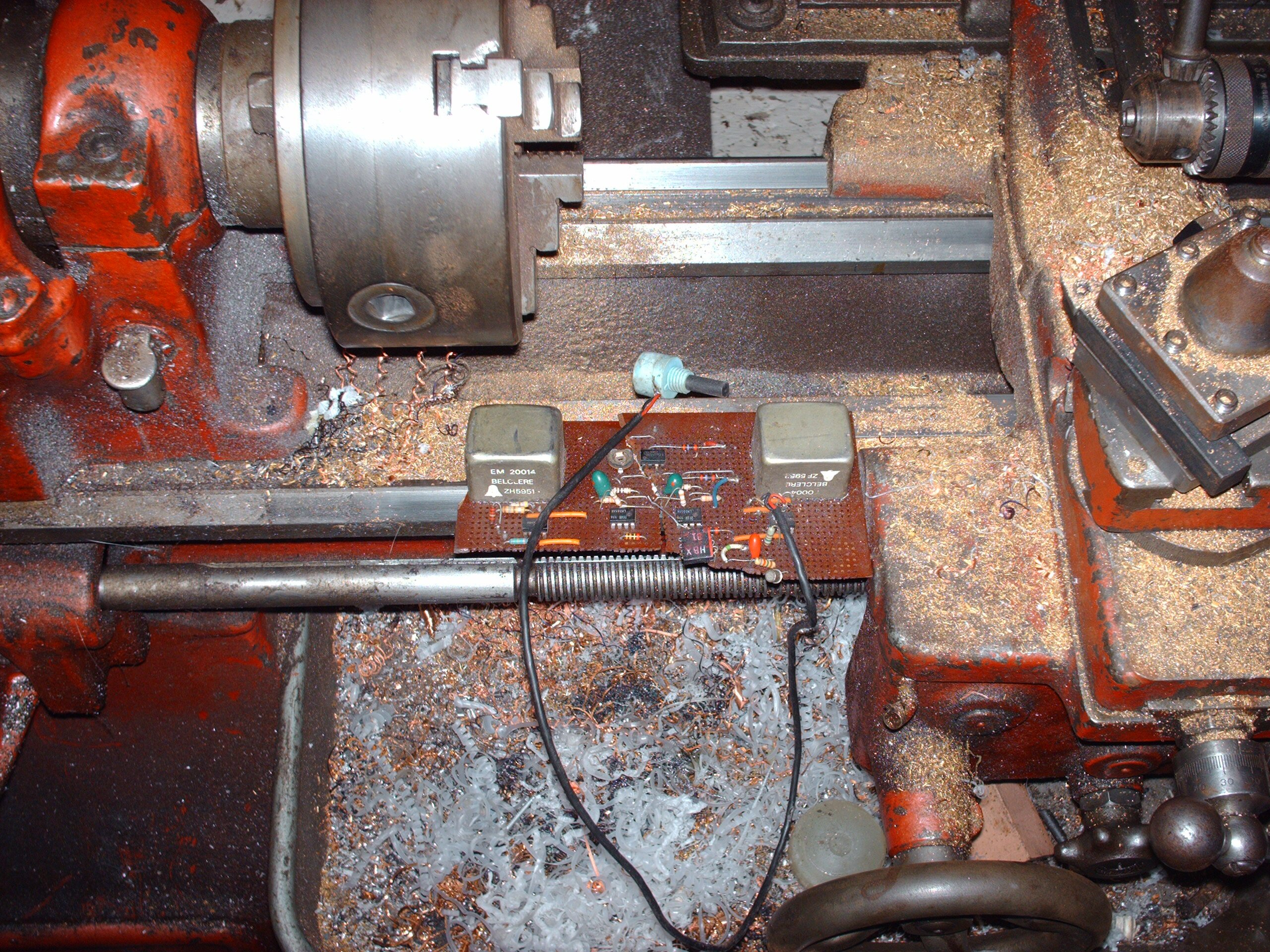

Here is the (very beat-up) prototype. Note the large audio transformers cased in mu-metal.

Prototype audio frequency shifter, on top of ancient South Bend lathe


Any readers that are interested, feel free to drop us a line if you have comments or questions on this topic!

Check out the Unknown Editor's amazing archives when you are looking for a way to screw off for a couple of hours or more!